How Should Self-Driving Cars Choose Who Not to Kill?

A popular MIT quiz asked ordinary people to make ethical judgments for machines

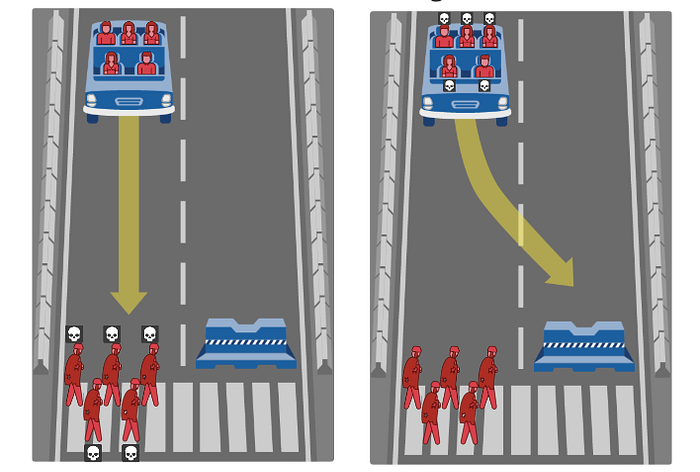

If an automated car had to choose between crashing into a barrier, killing its three female passengers, or running over one child in the street — which call should it make?

When three U.S.-based researchers started thinking about the future of self-driving cars, they wondered how these vehicles should make the tough ethical decisions that humans usually make instinctively. The idea prompted Jean-François Bonnefon, Azim Shariff, and Iyad Rahwan to design an online quiz called The Moral Machine. Would you run over a man or a woman? An adult or a child? A dog or a human? A group or an individual?

By 2069, autonomous vehicles could be the greatest disruptor to transport since the Model T Ford was launched in Detroit in 1908. Although 62 companies hold permits to test self-driving cars in California, the industry remains ethically unprepared. The Moral Machine was designed to give ordinary people some insight into machine ethics, adding their voices to a debate often limited to policymakers, car manufacturers, and philosophers. Medium spoke to Bonnefon and Shariff about what their results tell us about one of the future’s greatest moral dilemmas.

This interview has been edited and condensed for clarity.

Medium: What’s the difference between the way humans and machines make moral decisions?

Jean-François Bonnefon: Moral decisions made by humans are influenced by so many things. People react on the basis of hormones, but they don’t do that in a reasoned way, not when they make decisions really fast. You and I could sit and spend one hour discussing how we would like to react. But there’s no point: we cannot program ourselves to do what we would like to do, because we act on instinct. But with machines, we can tell them what we want them to do.

Azim Shariff: Machines have the luxury of deliberation that humans don’t have. With that luxury come the responsibilities of deliberation as well.