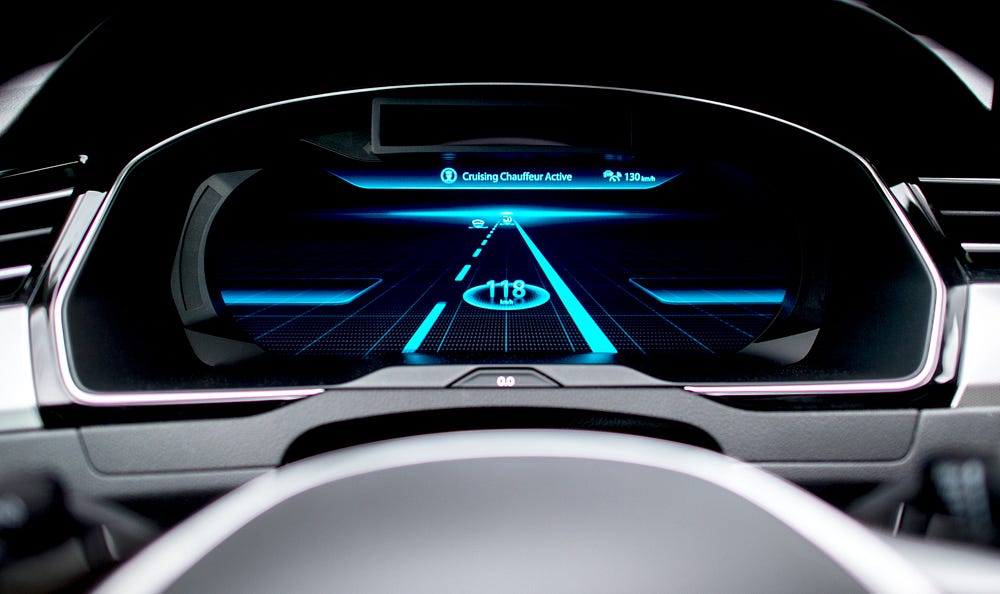

Imagine you’re cruising in your new Tesla, autopilot engaged. Suddenly you feel yourself veer into the other lane, and you grab the wheel just in time to avoid an oncoming car. When you pull over, pulse still racing, and look over the scene, it all seems normal. But upon closer inspection, you notice a series of translucent stickers leading away from the dotted lane divider. And to your Tesla, these stickers represent a non-existent bend in the road that could have killed you.

In April this year, a research team at the Chinese tech giant Tencent showed that a Tesla Model S in autopilot mode could be tricked into following a bend in the road that didn’t exist simply by adding stickers to the road in a particular pattern. Earlier research in the U.S. had shown that small changes to a stop sign could cause a driverless car to mistakenly perceive it as a speed limit sign. Another study found that by playing tones indecipherable to a person, a malicious attacker could cause an Amazon Echo to order unwanted items.

These discoveries are part of a growing area of study known as adversarial machine learning. As more machines become artificially intelligent, computer scientists are learning that A.I. can be manipulated into perceiving the world in wrong, sometimes dangerous ways. And because these techniques “trick” the system instead of “hacking” it, federal laws and security standards may not protect us from these malicious new behaviors — and the serious consequences they can have.
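To make the idea of "tricking" a model concrete, here is a minimal sketch in Python. It is not the method used by the Tencent team or the other researchers mentioned above; it assumes a toy linear classifier with made-up weights, and the names `WEIGHTS`, `predict`, and `perturb` are hypothetical. It shows how nudging every input value by a small, bounded amount in a carefully chosen direction can flip the model's answer.

```python
# Toy linear classifier (illustrative assumption, not a real perception system):
# it answers "stop sign" when the weighted sum of its inputs is positive.
WEIGHTS = [0.5, -0.25, 0.75, -0.5]  # made-up numbers
BIAS = 0.0


def score(x):
    """Weighted sum of the input features plus the bias."""
    return sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS


def predict(x):
    """Map the score to one of two labels."""
    return "stop sign" if score(x) > 0 else "speed limit"


def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)


def perturb(x, epsilon):
    """Shift each feature by at most epsilon in the direction that lowers the score."""
    return [xi - epsilon * sign(w) for w, xi in zip(WEIGHTS, x)]


original = [1.0, 1.0, 1.0, 1.0]
adversarial = perturb(original, 0.3)

print(predict(original))      # label for the clean input
print(predict(adversarial))   # label after the small perturbation
```

In this toy setup no feature moves by more than 0.3, yet the label changes, because every small shift pushes the score the same way. Attacks on real deep learning systems are far more involved, but they exploit the same kind of accumulated small changes.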

Machine learning (M.L.) is a major subset of A.I. that typically involves a two-phase process to ascertain patterns in data. In the first phase, a model is trained toward a particular objective, such as detecting spam emails, through exposure to many examples. In the second phase, the model is shown a new example and must infer its category. So-called deep learning is a subset of M.L. that approaches classification problems layer by layer, with each layer devoted to an aspect of the classification. Thus, a deep learning system trying…