Four Questions About Regulating Online Hate Speech

Determining the standards for online hate speech and disinformation

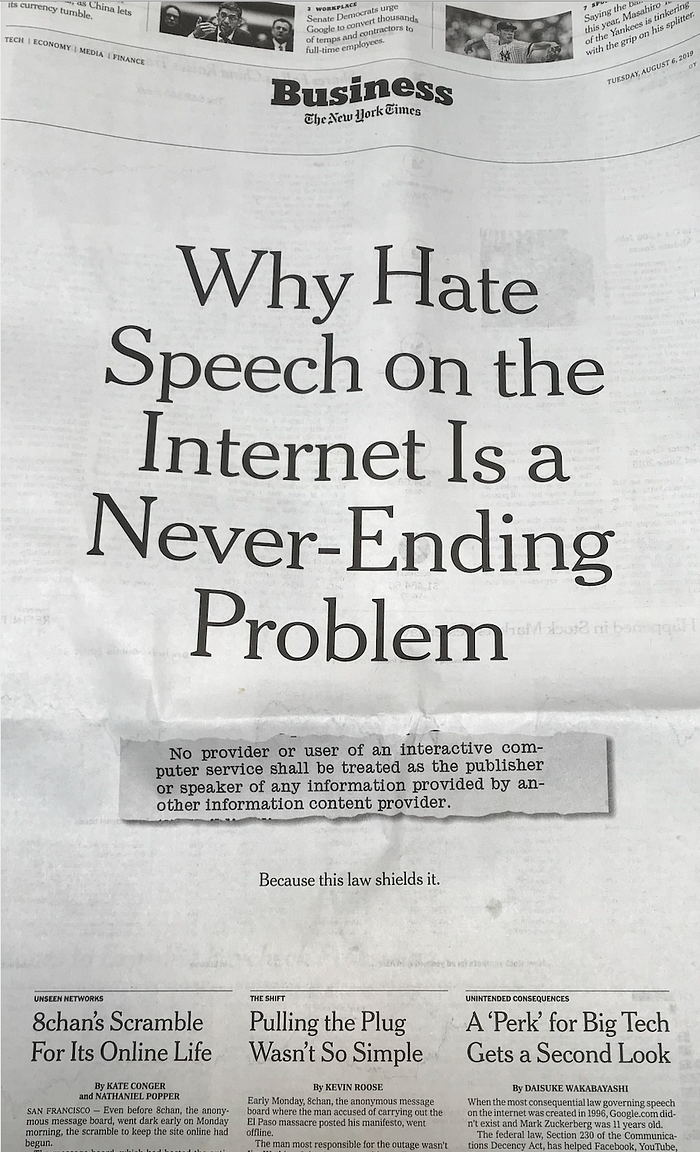

On August 6, 2019, the New York Times devoted the front page of its business section to this screaming headline and three articles that followed it:

The three articles involve some careful and important reporting. Two of them capture an underreported feature of online content issues, namely that internet companies other than social media firms have a role in regulating what we might call “dangerous speech” found on platforms like 8chan. A company like Cloudflare, which provides network security for millions of sites, can operate as a gateway for internet access, just as internet service providers, telecommunications companies, website hosting services, mobile device manufacturers, app stores, and many others do (see my report to the UN in 2017). Matthew Prince, the CEO of Cloudflare, has suggested that content moderation is a responsibility he does not want to have; he does not want Cloudflare to become an internet censor. (See this great piece from Evelyn Douek discussing Prince’s position and the issues at stake.)

But that headline.

Does hate speech persist online because Section 230 of the Communications Decency Act “shields it”? I’m sorry, Times readers — if you were looking for an easy fix to online hate, this isn’t it.

Look, there is a real debate to have over Section 230’s role in what so many regard as the cesspool of hatred we see online. In one of the three articles, the Times quotes Professor Danielle Citron, who says we may be in for a “moment of reexamination” of Section 230 and other online norms (and I commend to you her scholarship addressing online hate and harassment). But “hate speech” does not persist online because of some magical Section 230 shield. For the most concise overview, I strongly encourage checking out Daphne Keller’s piece in the Washington Post…