An A.I. Pioneer Wants an FDA for Facial Recognition

Erik Learned-Miller helped create one of the most important face datasets in the world. Now he wants to regulate his creation.

Erik Learned-Miller is one reason we talk about facial recognition at all.

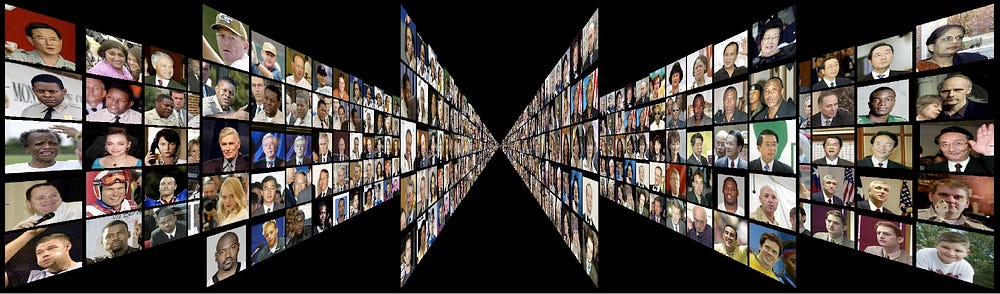

In 2007, years before the current A.I. boom made “deep learning” and “neural networks” common phrases in Silicon Valley, Learned-Miller and three colleagues at the University of Massachusetts Amherst released a dataset of faces titled Labeled Faces in the Wild.

To you or me, Labeled Faces in the Wild just looks like folders of unremarkable images. You can download them and look for yourself. There’s a picture of Alec Baldwin pointing at someone off camera. There’s Halle Berry smiling at the Oscars. There’s boxer Joe Gatti, gloves raised mid-fight. But to an artificial intelligence algorithm, these folders contain the essence of what it means to look like a human.

This is why Labeled Faces in the Wild, often abbreviated as LFW, is so important. It’s cited in some of the most impactful facial recognition research of the past decade. When Google and Facebook were competing in 2014 and 2015 on facial recognition accuracy, the common test was performance categorizing LFW images, which exist in an unchanging database. LFW has been cited nearly 3,500 times, by researchers at Microsoft and Stanford, computer scientists in China and Hong Kong, and by Geoff Hinton, the guy responsible for neural networks in the first place.

It’s a big deal.

But now that artificial intelligence is also big business, Learned-Miller is thinking about ways to rein in the technology. His prevailing idea right now: Regulate A.I. the way the FDA regulates the medical device industry. He doesn’t have an official name for it yet, but one he’s mulling is the FDA 2: the Facial recognition and Detection Agency.
