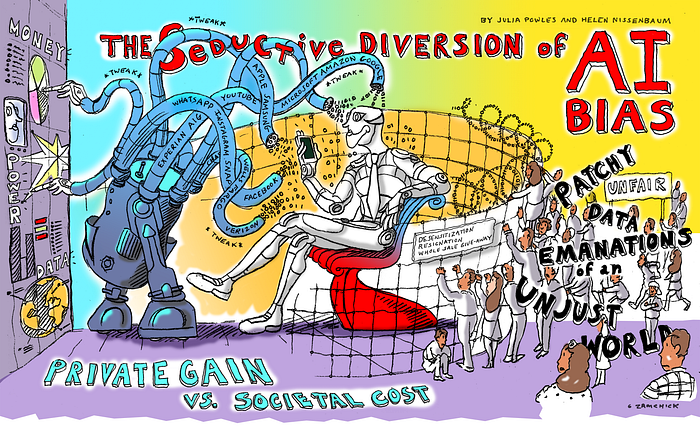

The Seductive Diversion of ‘Solving’ Bias in Artificial Intelligence

Trying to “fix” A.I. distracts from the more urgent questions about the technology

Co-authored with Helen Nissenbaum

The rise of Apple, Amazon, Alphabet, Microsoft, and Facebook as the world’s most valuable companies has been accompanied by two linked narratives about technology. One is about artificial intelligence — the golden promise and hard sell of these companies. A.I. is presented as a potent, pervasive, unstoppable force to solve our biggest problems, even though it’s essentially just about finding patterns in vast quantities of data. The second story is that A.I. has a problem: bias.

The tales of bias are legion: online ads that show men higher-paying jobs; delivery services that skip poor neighborhoods; facial recognition systems that fail people of color; recruitment tools that invisibly filter out women. A problematic self-righteousness surrounds these reports: Through quantification, of course we see the world we already inhabit. Yet each time, there is a sense of shock and awe and a detachment from affected communities in the discovery that systems driven by data about our world replicate and amplify racial, gender, and class inequality.

Serious thinkers in academia and business have swarmed to the A.I. bias problem, eager to tweak and improve the data and algorithms that drive artificial intelligence. They’ve latched onto fairness as the objective, obsessing over competing constructs of the term that can be rendered in measurable, mathematical form. If the hunt for a science of computational fairness were restricted to engineers, it would be one thing. But given our contemporary exaltation of and deference to technologists, it has limited the entire imagination of ethics, law, and the media as well.

There are three problems with this focus on A.I. bias. The first is that addressing bias as a computational problem obscures its root causes. Bias is a social problem, and seeking to solve it within the logic of automation is always going to be inadequate.

Second, even apparent success in tackling bias can have perverse consequences. Take the example of a facial recognition system that works poorly on women of color because…